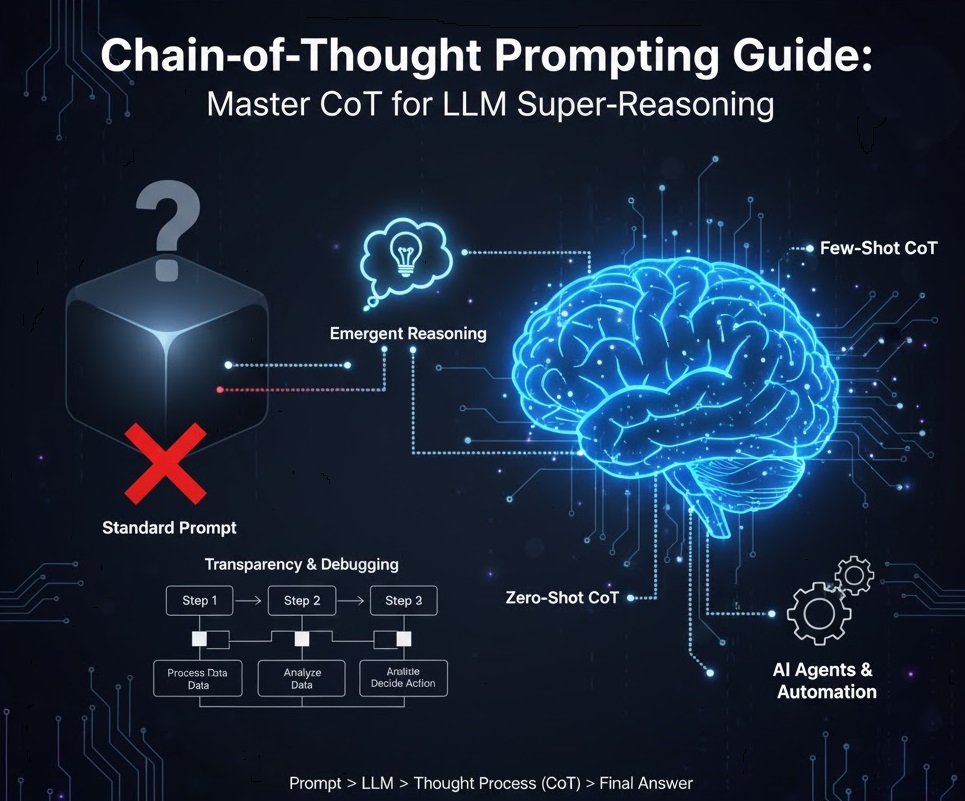

Chain-of-Thought Prompting Guide: Master CoT for LLM Super-Reasoning

Large Language Models (LLMs) have revolutionized text generation, but have you noticed them stumble on complex logic or multi-step problems? It’s like asking a brilliant student for an answer without asking them to show their work. They might get it right, but you don’t know how, and they’re more prone to errors when the problem gets tough. This is the LLM’s reasoning dilemma: a model can get stuck on a particular problem at any time. In today’s AI-driven era, prompt engineering has become one of the most in-demand skills for developers and business owners, because an LLM’s output depends heavily on the prompt you give it. In...
